Developing Test Ideas: Powering Smart SaaS Growth

Trying to increase conversions without technical headaches can feel like searching for hidden shortcuts in a crowded marketplace. A/B testing offers a straightforward and scalable way for digital marketers to make smarter decisions, comparing two variations to see which drives results. With a focus on data-driven decision making and no-code solutions, this guide shows how to generate smart test ideas, prioritize what matters, and avoid common mistakes that weaken marketing experiments.

Table of Contents

- Defining Test Ideas In A/B Marketing

- Proven Methods For Brainstorming Test Ideas

- Prioritizing And Validating Experiment Concepts

- Real-World SaaS Use Cases And Inspiration

- Common Pitfalls And Mistakes To Avoid

Key Takeaways

| Point | Details |
|---|---|
| A/B Testing Fundamentals | Systematic validation of marketing elements through variations helps in making data-driven decisions. |
| Effective Brainstorming Techniques | Structured brainstorming sessions enhance idea generation and improve test hypothesis formulation. |
| Prioritizing Experiments | Evaluate potential experiments based on business impact, complexity, and strategic alignment for effective execution. |
| Learning from Failures | Treat each A/B test as a learning opportunity to refine strategies and improve future experiments. |

Defining Test Ideas in A/B Marketing

A/B testing is an experimental method that lets marketers systematically validate design, messaging, and user experience hypotheses. By comparing two variations and measuring their relative performance, businesses can base decisions on data rather than intuition.

At its core, A/B testing involves creating strategic variations of marketing elements to understand which version resonates most effectively with target audiences. These variations can include:

- Headline wording

- Call-to-action button colors

- Page layout designs

- Image selections

- Form field arrangements

- Pricing presentation formats

The fundamental principle behind A/B testing is straightforward: randomly split your audience into two groups and expose each to a different version of the marketing element. Split testing methodologies help marketers understand nuanced user preferences by tracking key performance metrics like conversion rates, click-through rates, and engagement levels.
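
As a rough illustration of the random split described above, the sketch below assigns each visitor to a variant by hashing a user ID together with an experiment name. The function name `assign_variant`, the ID format, and the choice of SHA-256 are assumptions made for this example and are not taken from any particular testing tool. Hashing rather than drawing a fresh random number keeps the assignment stable, so a returning visitor sees the same version each time.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant of an experiment.

    Hypothetical helper for illustration: the same (user_id, experiment)
    pair always yields the same variant, and different experiments split
    the audience independently because the experiment name is part of the key.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Interpret the hash as a large integer and take it modulo the
    # number of variants to pick a bucket.
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Example usage with made-up identifiers.
    print(assign_variant("user-42", "pricing-page-headline"))
```

Across many visitors the hash spreads users close to evenly between the two groups, which is what the conversion and click-through comparisons rely on.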

Successful A/B testing requires careful planning and precise execution. Marketers must develop testable hypotheses that address specific business objectives, whether improving user experience, increasing conversion rates, or optimizing customer acquisition strategies. Effective test ideas typically emerge from a combination of data analysis, user feedback, and creative problem-solving.

Pro tip: Start your A/B testing journey by identifying your most critical conversion points and developing hypotheses that could incrementally improve performance.

Proven Methods for Brainstorming Test Ideas

Creating innovative A/B testing strategies requires structured yet creative brainstorming techniques that systematically generate and refine potential test ideas. Brainstorming techniques provide a critical framework for transforming scattered marketing hypotheses into actionable experimental designs.

Successful test idea generation typically involves multiple collaborative approaches:

- Mind mapping marketing challenges

- Analyzing user behavior data

- Reviewing conversion funnel bottlenecks

- Conducting customer feedback surveys

- Examining competitor marketing strategies

- Exploring psychological triggers in user experience

Teams can leverage structured brainstorming methods to maximize idea generation. Structured brainstorming techniques encourage participants to build upon each other's concepts, creating a collaborative environment that promotes innovative thinking. The key is to generate a high volume of ideas without immediate judgment, allowing creative exploration before critical evaluation.

Effective brainstorming for A/B testing requires a systematic approach that balances creativity with strategic thinking. Marketers should focus on developing testable hypotheses that address specific business objectives, such as improving conversion rates, reducing bounce rates, or enhancing user engagement. By combining quantitative data analysis with creative problem-solving, teams can uncover unique testing opportunities that drive meaningful improvements.

Pro tip: Create a dedicated brainstorming session with cross-functional team members to generate diverse perspectives and uncover innovative A/B testing ideas.

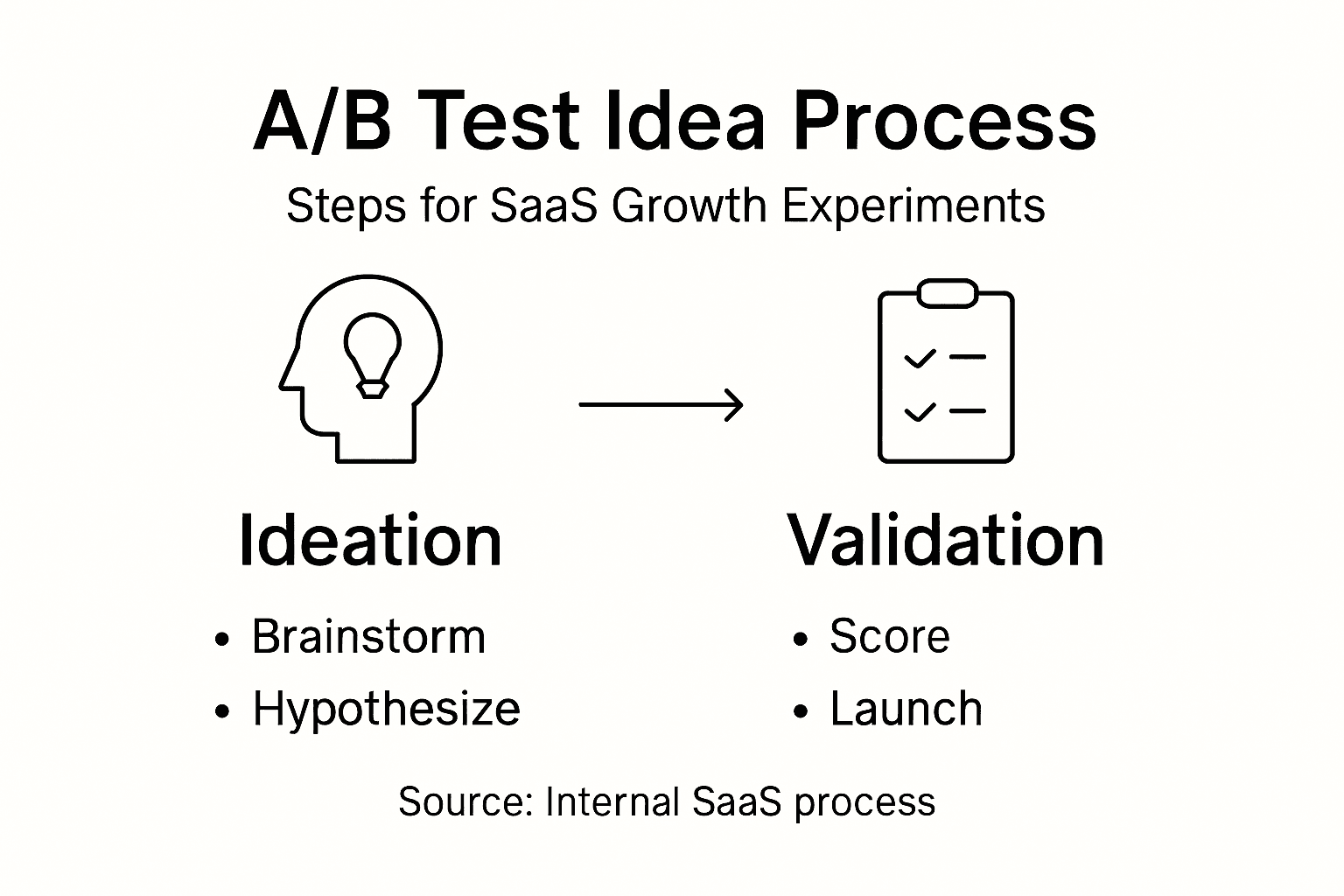

Prioritizing and Validating Experiment Concepts

Growth experimentation strategies require a systematic approach to transforming innovative ideas into actionable business improvements. Successful SaaS teams must develop a robust framework for identifying, evaluating, and prioritizing potential experiments that drive meaningful results.

Effective experiment prioritization involves multiple critical considerations:

- Potential business impact

- Implementation complexity

- Resource requirements

- Estimated time to results

- Alignment with strategic objectives

- Potential risk and downside scenarios

- Measurability of outcomes

Hypothesis development becomes crucial in this process. Marketers must craft precise, testable statements that connect specific changes to expected outcomes. Product management validation practices emphasize the importance of grounding these hypotheses in actual user needs and business goals, ensuring that each experiment represents a strategic opportunity for growth.

The validation process demands a structured methodology that balances quantitative analysis with qualitative insights. Successful teams use multi-dimensional evaluation frameworks that score potential experiments across different dimensions, creating a comprehensive view of each concept's potential value. This approach prevents teams from pursuing low-impact ideas and focuses resources on experiments with the highest likelihood of driving meaningful business improvements.

Here's a comparison of key considerations when prioritizing A/B test experiments:

| Criteria | Why It Matters | Typical Impact |
|---|---|---|
| Business Impact | Drives revenue or user growth | High priority experiments |
| Implementation Complexity | Assesses technical difficulty | Guides resource allocation |
| Measurability | Enables tracking of clear results | Ensures actionable insights |
| Strategic Alignment | Supports overall business goals | Maximizes long-term benefits |

Pro tip: Create a standardized experiment scoring matrix that objectively ranks potential tests based on impact, effort, and strategic alignment.
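
A scoring matrix of this kind can be sketched in a few lines. The version below is only one possible design: the 1-to-5 scales, the weights, and the names `ExperimentIdea`, `score`, and `rank` are assumptions chosen for illustration, and teams would normally tune them to their own priorities.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    """One candidate test, rated by the team on 1 (low) to 5 (high) scales."""
    name: str
    impact: int     # expected business impact
    effort: int     # implementation effort (5 = hardest)
    alignment: int  # fit with strategic objectives


def score(idea: ExperimentIdea, weights=(0.5, 0.3, 0.2)) -> float:
    """Weighted score; the weights are illustrative assumptions."""
    w_impact, w_ease, w_alignment = weights
    ease = 6 - idea.effort  # invert effort so that easier ideas score higher
    return w_impact * idea.impact + w_ease * ease + w_alignment * idea.alignment


def rank(ideas):
    """Return ideas ordered from highest to lowest score."""
    return sorted(ideas, key=score, reverse=True)


if __name__ == "__main__":
    backlog = [
        ExperimentIdea("Rewrite signup headline", impact=4, effort=1, alignment=4),
        ExperimentIdea("Redesign pricing page", impact=5, effort=5, alignment=5),
    ]
    for idea in rank(backlog):
        print(f"{idea.name}: {score(idea):.1f}")
```

With these example weights, a cheap headline change can outrank a high-impact but expensive redesign, which is the kind of trade-off the matrix is meant to surface.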

Real-World SaaS Use Cases and Inspiration

SaaS case studies offer powerful insights into practical strategies for overcoming growth challenges and driving meaningful business transformation. These real-world examples provide marketers and product teams with actionable inspiration for developing innovative testing and optimization approaches.

Some compelling areas of SaaS experimentation include:

- Onboarding flow optimization

- Pricing model variations

- Feature discoverability improvements

- User interface redesigns

- Customer engagement triggers

- Conversion funnel refinements

- Personalization strategies

Strategic innovation often emerges from carefully analyzed use cases. Successful SaaS companies such as Slack, Zoom, and Shopify show how strategic testing can address core customer needs and support scalable growth models. These examples reveal that continuous experimentation and user-centric design are fundamental to sustained SaaS success.

By studying diverse use cases, teams can uncover nuanced insights about user behavior, product performance, and strategic optimization. The most effective organizations treat each experiment as a learning opportunity, using data-driven insights to incrementally improve their product offerings and user experiences. This approach transforms A/B testing from a tactical exercise into a strategic growth mechanism.

Pro tip: Develop a systematic approach to documenting and learning from each A/B test, creating an internal knowledge base that captures both successful and unsuccessful experiment outcomes.

Common Pitfalls and Mistakes to Avoid

Avoidable mistakes can derail even the most promising marketing experiments and product strategies. Understanding these common pitfalls is crucial for creating robust, effective testing approaches that drive meaningful business growth.

Critical mistakes to avoid in A/B testing include:

- Insufficient sample size, leading to underpowered tests and unreliable results

- Testing too many variables simultaneously

- Ignoring statistical significance thresholds

- Confirmation bias in hypothesis development

- Premature scaling of experimental findings

- Neglecting qualitative user feedback

- Failing to document experimental processes
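
The first pitfall in the list above can be checked before a test launches. The sketch below estimates how many visitors each variant needs, using the standard normal-approximation formula for comparing two proportions. The function name, the default 5% significance level, and the 80% power target are assumptions for illustration, and dedicated calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float,
                            relative_lift: float,
                            alpha: float = 0.05,
                            power: float = 0.8) -> int:
    """Approximate visitors needed per variant for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest relative improvement worth detecting,
                   e.g. 0.2 for a 20% lift (5% -> 6%)
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Made-up numbers: 5% baseline, hoping to detect a 20% relative lift.
    print(sample_size_per_variant(0.05, 0.2))
```

For a 5% baseline and a 20% relative lift, this approximation lands on the order of 8,000 visitors per variant. Smaller expected lifts push the requirement up quickly, which is why low-traffic pages often cannot support tests of subtle changes.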

Experimental design requires meticulous attention to detail and a systematic approach. SaaS testing challenges highlight the complexity of creating reliable testing environments that account for multi-tenancy, diverse user bases, and rapidly changing technological landscapes. Successful teams recognize that rigorous testing is not about eliminating all potential errors, but about creating robust frameworks that can identify and mitigate risks effectively.

The most successful organizations approach A/B testing as a continuous learning process. They view each experiment not as a pass/fail scenario, but as an opportunity to gain deeper insights into user behavior, product performance, and strategic optimization. This approach transforms potential mistakes into valuable learning experiences that incrementally improve product development and marketing strategies.

This summary outlines common SaaS A/B testing pitfalls and how to avoid them:

| Pitfall | Consequence | Prevention Strategy |
|---|---|---|
| Small Sample Size | Unreliable outcomes | Set sample size and significance thresholds before launch |
| Too Many Variables | Confused results | Test one variable at a time |
| Confirmation Bias | Skewed findings | Use objective hypothesis frameworks |
| Neglecting Documentation | Lost learning | Maintain structured experiment records |
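
To make the idea of a statistical threshold concrete, here is a minimal sketch of a two-proportion z-test, one common way to check whether the difference between two conversion rates is likely to be more than noise. The function name and the sample figures are assumptions for illustration, and testing platforms may apply other methods, such as Bayesian or sequential analysis.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, p_value) for the difference in conversion rate, B minus A.

    conv_a / conv_b: number of conversions in each variant
    n_a / n_b: number of visitors in each variant
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in the data; the test is undefined.")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided
    return z, p_value


if __name__ == "__main__":
    # Made-up results: 400/8000 conversions for A, 480/8000 for B.
    z, p = two_proportion_z_test(400, 8000, 480, 8000)
    print(f"z = {z:.2f}, p = {p:.4f}")
    print("significant at 5%" if p < 0.05 else "not significant at 5%")
```

Deciding on the threshold (for example, p < 0.05) before the test starts, rather than after looking at the results, also helps guard against the confirmation bias listed in the table.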

Pro tip: Create a comprehensive testing post-mortem template that captures both successful and unsuccessful experiment outcomes, ensuring systematic knowledge retention across your organization.

Drive Smarter SaaS Growth with Stellar’s A/B Testing Solution

Developing effective test ideas and prioritizing experiments is critical for meaningful SaaS growth. The article highlights common challenges such as building testable hypotheses, avoiding pitfalls, and turning data into actionable insights. If you want to overcome these hurdles and power your growth experiments with confidence, you need a tool designed for speed, simplicity, and real-time analytics.

Stellar is tailored for marketers and growth hackers at small to medium-sized businesses who want to streamline their experimentation process. With a lightweight script size of just 5.4KB and a no-code visual editor, you can quickly create and launch A/B tests without technical complexity. Advanced goal tracking and dynamic keyword insertion help you measure impact precisely, in line with the validation and prioritization methods discussed in the article.

Ready to transform your test ideas into measurable growth outcomes? Start optimizing your SaaS journey today with Stellar’s powerful A/B testing platform. Visit Stellar now to explore how you can build effective experiments fast and see your growth accelerate. Learn more about our A/B Testing Tool and how our Visual Editor simplifies test creation so you can focus on what matters most.

Frequently Asked Questions

What is A/B testing in SaaS marketing?

A/B testing in SaaS marketing is a method where two variations of a marketing element are compared to determine which performs better based on user responses. This systematic approach helps validate design, messaging, and user experience hypotheses.

How do I generate effective test ideas for A/B testing?

Generating effective test ideas involves techniques like mind mapping, analyzing user behavior data, reviewing feedback, and examining competitor strategies. Collaborating with cross-functional teams can also enhance the brainstorming process.

What key factors should I consider when prioritizing A/B test experiments?

When prioritizing A/B test experiments, consider potential business impact, implementation complexity, resource requirements, estimated time to results, alignment with strategic objectives, and measurability of outcomes.

What are common mistakes to avoid in A/B testing?

Common mistakes in A/B testing include using insufficient sample sizes, testing too many variables at once, ignoring the need for statistical significance, and failing to document experimental processes. Avoiding these pitfalls ensures more reliable results.

Published: 2/16/2026